A recent paper in PLoS Biology has caused a minor stir. In this paper, Pasley and colleagues show that you can find out which word a person has just heard by decoding activity in a specific part of the human brain. This is mind reading, in a sense. So it's not surprising that some are talking about the Orwellian implications of this study, or speculating about the possibility of decoding inner speech in the same way.

But what did Pasley and colleagues actually do? It's quite a technical paper, so you will have to forgive me if I have missed a few details. But the general idea behind the study is straightforward.

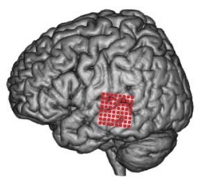

Pasley and colleagues recorded directly from the brains of human participants. Normally this is not possible, because intracranial recordings are highly invasive. You have to open up the skull in order to attach recording equipment to the brain. Few participants will agree to this, and even fewer ethics committees will condone it. But sometimes, when a willing participant is about to undergo brain surgery (usually for a severe form of epilepsy), scientists get the unique opportunity to do this kind of experiment with humans.

The brain area that Pasley and colleagues recorded from was the posterior superior temporal gyrus. This area is traditionally thought of as a midway station in the transformation from low-level acoustic information (sounds without meaning attached to them) to conceptual representations (the meaning of words, concepts, etc.).

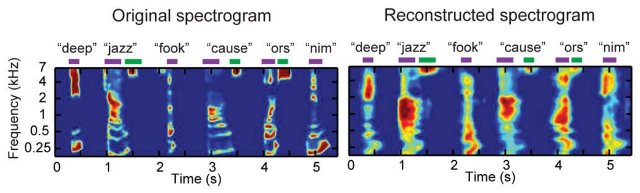

The neurons in this brain area are tuned to specific frequencies. Some respond to high-pitched sounds, some to low. Because of this property, you can determine the frequency profile of the sound that is currently being listened to by recording from many neurons simultaneously. If you do this over time, you get what is called a spectrogram.
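To make the idea of a spectrogram concrete, here is a minimal sketch in Python that computes one from a sound rather than from neurons. Everything in it is an assumption made for illustration: the function name `spectrogram`, the 8000 Hz sample rate, the 50 ms windows, and the three frequency bands are not taken from the paper. The toy sound is a low tone followed by a high tone, so the energy should move from the low band to the high band over time.

```python
import math

def spectrogram(signal, sample_rate, window_size, freqs):
    """Return one row per time window; each row holds the energy at each frequency in freqs."""
    rows = []
    for start in range(0, len(signal) - window_size + 1, window_size):
        window = signal[start:start + window_size]
        row = []
        for f in freqs:
            # Project the window onto a cosine and a sine at frequency f (a direct DFT bin).
            re = sum(x * math.cos(2 * math.pi * f * n / sample_rate) for n, x in enumerate(window))
            im = sum(x * math.sin(2 * math.pi * f * n / sample_rate) for n, x in enumerate(window))
            row.append(math.hypot(re, im) / window_size)
        rows.append(row)
    return rows

def tone(freq, n_samples, sample_rate):
    """A pure sine tone (hypothetical test signal)."""
    return [math.sin(2 * math.pi * freq * i / sample_rate) for i in range(n_samples)]

sample_rate = 8000                       # assumed, in Hz
freqs = [500, 1000, 2000]                # assumed frequency bands, in Hz
signal = tone(500, 800, sample_rate) + tone(2000, 800, sample_rate)

spec = spectrogram(signal, sample_rate, window_size=400, freqs=freqs)
for row in spec:
    print([round(v, 3) for v in row])    # one line per 50 ms window
```

Each printed line is one time slice and each column is one frequency band. In the study, the frequency-tuned responses of the recorded sites play the role that the columns play here.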

Every word has a characteristic spectrogram. So what Pasley and colleagues did is the following: They constructed a spectrogram based on the activity of the neurons that they were recording from, and compared it with a database of real spectrograms of words. Next, they simply selected the word whose spectrogram best fitted the constructed one. And they were correct about 90% of the time! That is, in about 90% of the cases, based solely on brain activity, they correctly determined which word was being listened to.

Figure adapted from Pasley et al. (2012)
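The matching step can be sketched in a few lines of Python. This is a toy version under loud assumptions, not the method from the paper: the three words, their randomly generated "spectrograms", the noise level, and the use of Pearson correlation as the measure of fit are all my own choices for illustration.

```python
import math
import random

def flatten(spec):
    """Turn a time-by-frequency grid into one long list of values."""
    return [v for row in spec for v in row]

def correlation(a, b):
    """Pearson correlation between two equally long lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    sd_a = math.sqrt(sum((x - mean_a) ** 2 for x in a))
    sd_b = math.sqrt(sum((y - mean_b) ** 2 for y in b))
    return cov / (sd_a * sd_b)

def identify(reconstructed, templates):
    """Pick the word whose template spectrogram correlates best with the reconstruction."""
    target = flatten(reconstructed)
    return max(templates, key=lambda word: correlation(target, flatten(templates[word])))

rng = random.Random(0)

# Hypothetical database: 10 time steps x 8 frequency bands of made-up energy per word.
templates = {
    word: [[rng.random() for _ in range(8)] for _ in range(10)]
    for word in ["deep", "jazz", "cause"]
}

# Stand-in for a spectrogram reconstructed from brain activity: the true one plus noise.
noisy = [[v + rng.gauss(0, 0.2) for v in row] for row in templates["jazz"]]

print(identify(noisy, templates))
```

The point of the sketch is that the decoder never has to come up with a word from scratch. It only has to rank a fixed list of candidates.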

Amazing, right? But when you know the details you realize that, amazing though it may be, it's not magical and certainly a far cry from decoding inner speech—real mind reading. The process is more akin to automatic text recognition (but way cooler).

The first caveat is that the high level of accuracy was apparently achieved using a small database of 47 words. The correct word was always one of these 47, which kind of narrows it down. For real mind reading, you would have to select from an extremely large list of possible words. This is much harder.
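A quick simulation gives a feel for why the size of the word list matters. This is a toy model with invented parameters (random 10-number "templates", Gaussian noise, nearest-template guessing) and says nothing about the actual numbers in the paper. It only shows the general trend: with the same noise, picking the right item gets harder as the list grows, and guessing at random with 47 words would already be right about 1 time in 47, or roughly 2%.

```python
import random

def simulate_accuracy(vocab_size, n_features=10, noise=0.3, trials=200, seed=0):
    """Fraction of trials in which the nearest template is the true one (toy model)."""
    rng = random.Random(seed)
    # Each "word" is a random point; a stand-in for its spectrogram.
    templates = [[rng.random() for _ in range(n_features)] for _ in range(vocab_size)]
    correct = 0
    for _ in range(trials):
        true_index = rng.randrange(vocab_size)
        # Noisy observation of the true word, standing in for a reconstruction.
        observed = [v + rng.gauss(0, noise) for v in templates[true_index]]
        guess = min(
            range(vocab_size),
            key=lambda i: sum((o - t) ** 2 for o, t in zip(observed, templates[i])),
        )
        if guess == true_index:
            correct += 1
    return correct / trials

for size in (2, 47, 500):
    chance = 1 / size
    print(f"{size} words: chance {chance:.4f}, simulated accuracy {simulate_accuracy(size):.2f}")
```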

Also, the decoding process relied on low-level properties of sounds. More abstract thought processes are much harder to decode from brain activity than simple ones. For example, determining from neural activity whether someone is looking at a rightwards or leftwards tilted grating is, by today's standards, pretty easy. Determining whether someone is looking at some specific scene is much harder. Determining which childhood memory someone is currently visualizing is, well... not within the realm of possibility just yet.

Not to take anything away from the achievement of Pasley and colleagues, because this is science at its best. But let's not get ahead of ourselves. I'd be amazed to see real mind reading happening within my lifetime.

References

Pasley, B. N., David, S. V., Mesgarani, N., Flinker, A., Shamma, S. A., Crone, N. E., Knight, R. T., et al. (2012). Reconstructing speech from human auditory cortex. PLoS Biology, 10(1), e1001251. doi:10.1371/journal.pbio.1001251 [Full text: Open access]